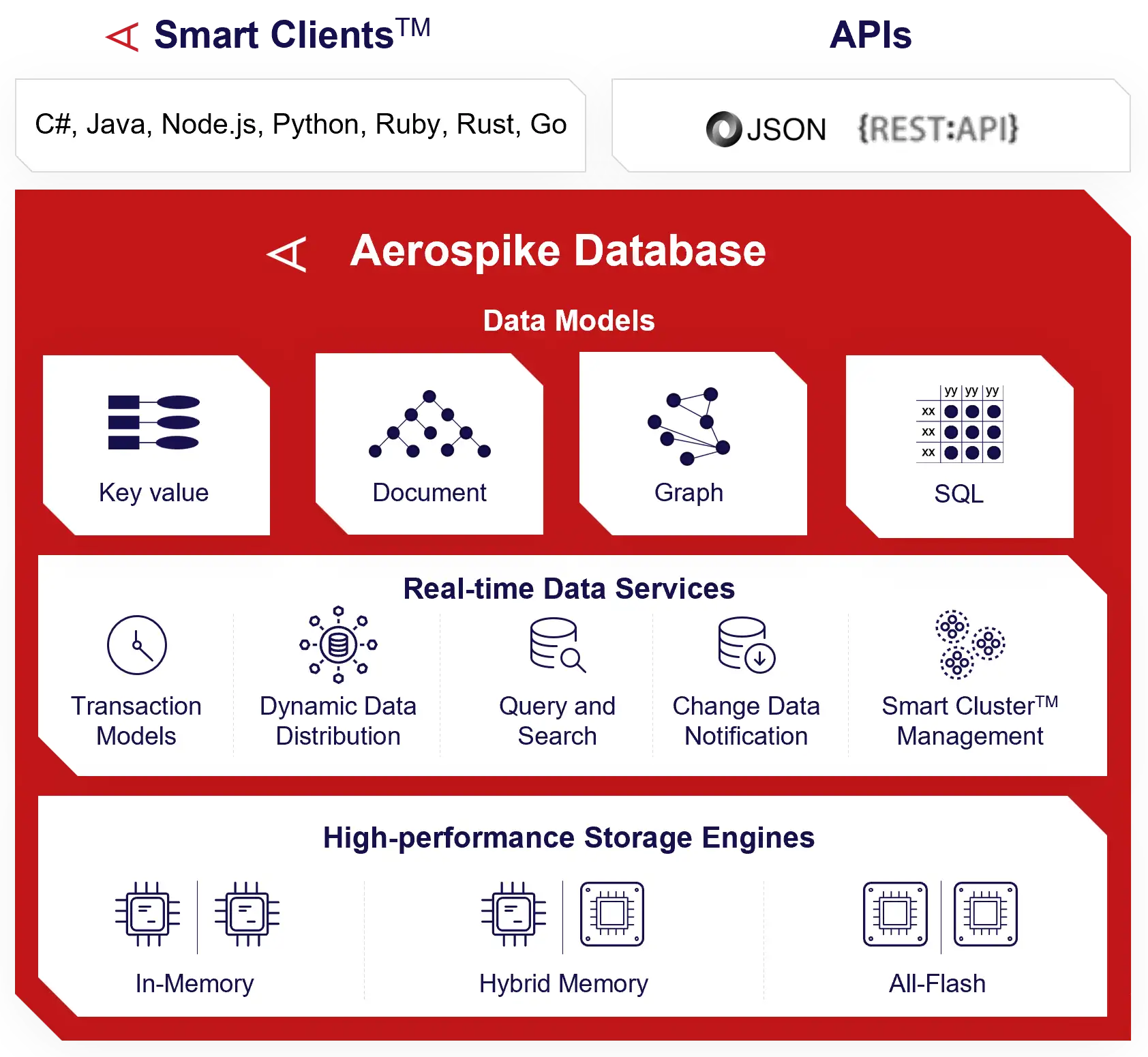

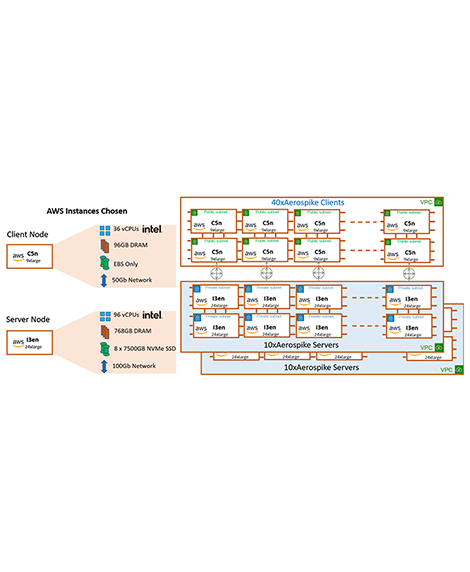

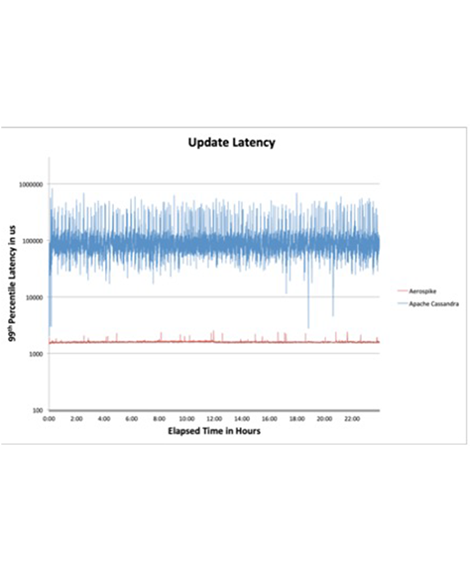

Aerospike Database 7 is the engine of the Aerospike real-time data platform. Unlike other NoSQL systems, Aerospike delivers predictable performance, scales from gigabytes to petabytes, is strongly consistent, and offers unparalleled cross-datacenter replication for a truly globally distributed real-time database. It can be deployed in any public, private, or multi-cloud environment and is also available as a managed service.

Aerospike Database 7 introduces a unified storage format across its in-memory, all-flash, and hybrid memory storage engines, giving customers maximum flexibility and cost efficiency no matter which storage engine they choose.