Predictable high performance

Aerospike has consistently demonstrated a per-server rate of at least 1 million transactions per second (TPS). Beyond being highly performant, Aerospike is predictably performant. It delivers high write throughput at consistently low latencies, which makes it easier and less costly for enterprises to build larger-scale applications.

To attract, retain, and engage consumers with rich, customized experiences and features, enterprises need to modernize their application architectures with real-time big data and decisioning. In most cases, applications must not only have immediate access to a large quantity of data but also be able to read specific data anywhere in the database while it is under a heavy write load. Real-time decisioning requires a multi-component technology stack composed of big data systems and intelligent applications. A database with high, predictable performance should form the core of this stack, so that the stack sustains peak performance and avoids the slow or erratic responses that can damage a brand.

Aerospike’s real-time engine, which has been in production since 2010, is built on technology that ensures predictability by delivering low latency alongside high write throughput and a sufficient level of consistency, including:

- Smart Client Architecture: Aerospike’s smart client architecture gives each client parallel access to multiple servers in a cluster, so it can access individual records without central coordination or a single point of failure. Because every request takes only one network hop, network jitter is minimized. The client maintains independent, per-server data structures and connection pools, so latency does not increase as the cluster grows. No load balancers are required, because the client itself spreads requests evenly across the nodes that own the data.

- Hybrid Memory Architecture: Aerospike’s hybrid memory architecture uses a highly parallel DRAM index, reducing lock contention and potentially unpredictable storage reads. The flash-optimized Aerospike storage layout reduces the work performed on storage devices, guaranteeing exactly one storage request per database request and reducing storage system jitter. Background maintenance tasks also use the DRAM index, reducing the effects of unpredictable background processes.

- C Programming Language: Aerospike is written in C, which lets it optimize its use of CPU caches, data paths, and memory accesses. Unlike Java-based databases, whose performance varies with the installed Java VM and its tuning parameters, Aerospike uses custom memory allocators that keep response times stable. Continual background processes handle data eviction, expiration, and migration.
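The single-hop routing performed by the smart client can be sketched as follows. This is a minimal illustration, not Aerospike's client code: it assumes keys are hashed to a fixed number of partitions (4,096 here), uses SHA-1 purely as a stand-in digest, and assigns partitions to nodes round-robin, whereas a real client learns the partition map from the cluster. `partition_id` and `SmartClientSketch` are hypothetical names.

```python
import hashlib
from collections import defaultdict

# Assumption: fixed partition count; SHA-1 is a stand-in for the real key digest.
N_PARTITIONS = 4096


def partition_id(set_name: str, user_key: str) -> int:
    """Hypothetical helper: map a record key to a partition ID in 0..4095."""
    digest = hashlib.sha1(f"{set_name}:{user_key}".encode()).digest()
    # Keep the low 12 bits of the first two digest bytes.
    return int.from_bytes(digest[:2], "little") & (N_PARTITIONS - 1)


class SmartClientSketch:
    """Toy client holding a partition map and one request pool per node."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        # Partition map: partition ID -> owning node. Assigned round-robin
        # here for illustration only.
        self.partition_map = {
            p: self.nodes[p % len(self.nodes)] for p in range(N_PARTITIONS)
        }
        # Independent per-node "connection pools", modelled as lists of sent keys.
        self.pools = defaultdict(list)

    def route(self, set_name: str, user_key: str) -> str:
        # Look up the owner locally, then send straight to it: one hop,
        # no coordinator and no load balancer in the path.
        node = self.partition_map[partition_id(set_name, user_key)]
        self.pools[node].append(user_key)
        return node


client = SmartClientSketch(["node-a", "node-b", "node-c"])
for i in range(3000):
    client.route("users", f"user-{i}")
print({n: len(client.pools[n]) for n in client.nodes})
```

Because every client computes the same key-to-partition mapping, any client can reach the owning node directly, and hashing spreads keys roughly evenly across nodes without a central balancer.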
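The hybrid memory idea, an in-memory index pointing at record data on a storage device, can be sketched in the same spirit. This toy model is an assumption-laden illustration rather than Aerospike's storage format: an `io.BytesIO` buffer stands in for a flash device, a Python dict stands in for the DRAM index, and `HybridStoreSketch` is a hypothetical name. It shows why a lookup costs at most one storage read and why a background expiration pass can run from the index alone.

```python
import io


class HybridStoreSketch:
    """Toy store: index held in memory, record bodies on a simulated device."""

    def __init__(self):
        self.device = io.BytesIO()  # stands in for an SSD/flash device
        self.index = {}             # key -> (offset, length, expires_at), in DRAM
        self.device_reads = 0       # number of storage requests issued

    def put(self, key: str, value: bytes, expires_at=None) -> None:
        # Append-only write: the new version goes to the end of the device
        # and the in-memory index entry is repointed at it.
        offset = self.device.seek(0, io.SEEK_END)
        self.device.write(value)
        self.index[key] = (offset, len(value), expires_at)

    def get(self, key: str):
        entry = self.index.get(key)  # index lookup touches memory only
        if entry is None:
            return None              # a miss is answered with no storage read
        offset, length, _ = entry
        self.device.seek(offset)
        self.device_reads += 1       # exactly one storage read per hit
        return self.device.read(length)

    def expire(self, now: float) -> int:
        """Background expiration pass that consults only the in-memory index."""
        expired = [
            k for k, (_, _, exp) in self.index.items()
            if exp is not None and exp <= now
        ]
        for k in expired:
            del self.index[k]
        return len(expired)


store = HybridStoreSketch()
store.put("a", b"hello")
store.put("a", b"HELLO2")  # overwrite: index now points at the newer copy
print(store.get("a"), store.device_reads)
```

Keeping the index in memory means the storage device only ever serves the single read that fetches the record itself, while housekeeping such as expiration never has to touch the device.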

Database performance must be not only predictable but also high. Accordingly, Aerospike provides industry-leading performance on both DRAM and flash hardware, in bare-metal and cloud deployments alike, and performs even better on virtual machines than on bare metal. Adding new instances, whether virtual machine-based or container-based, increases parallel throughput without operational downtime.

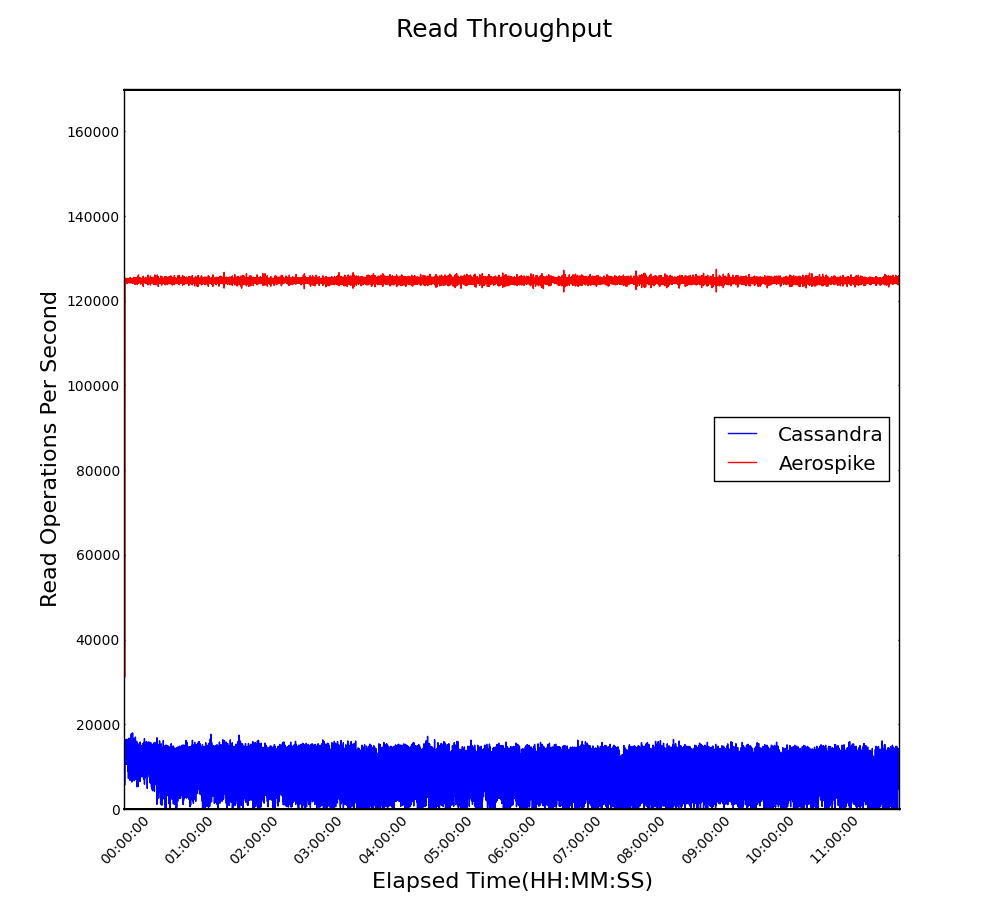

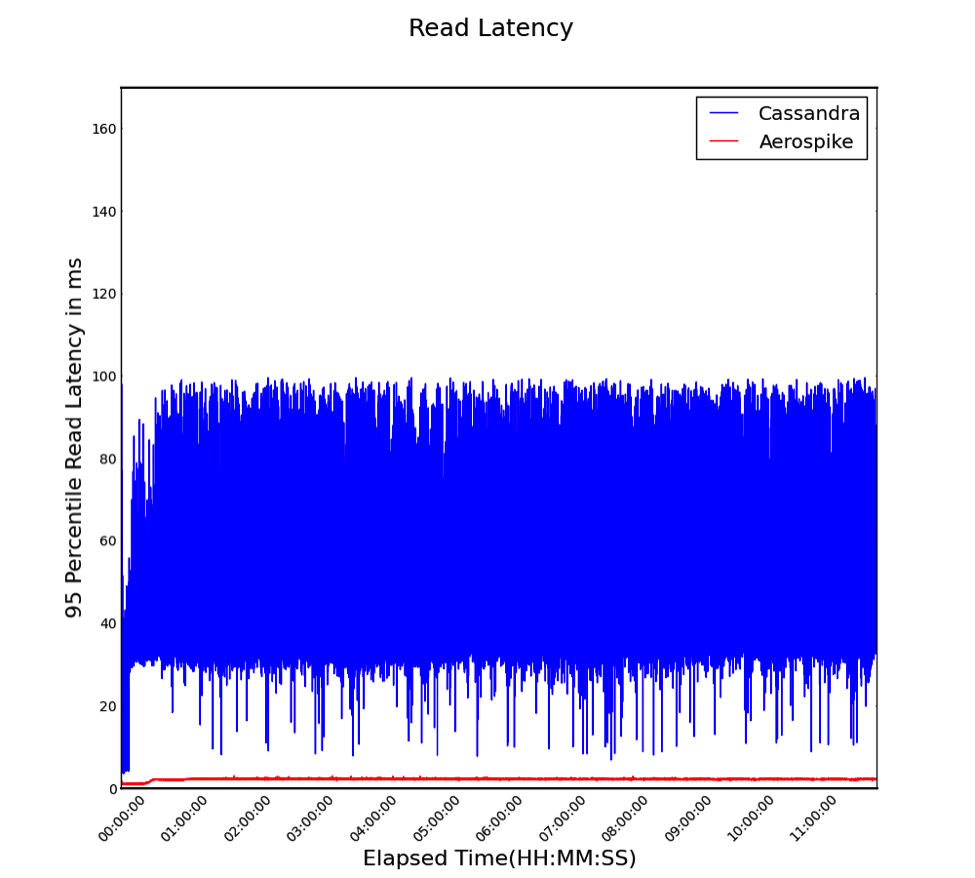

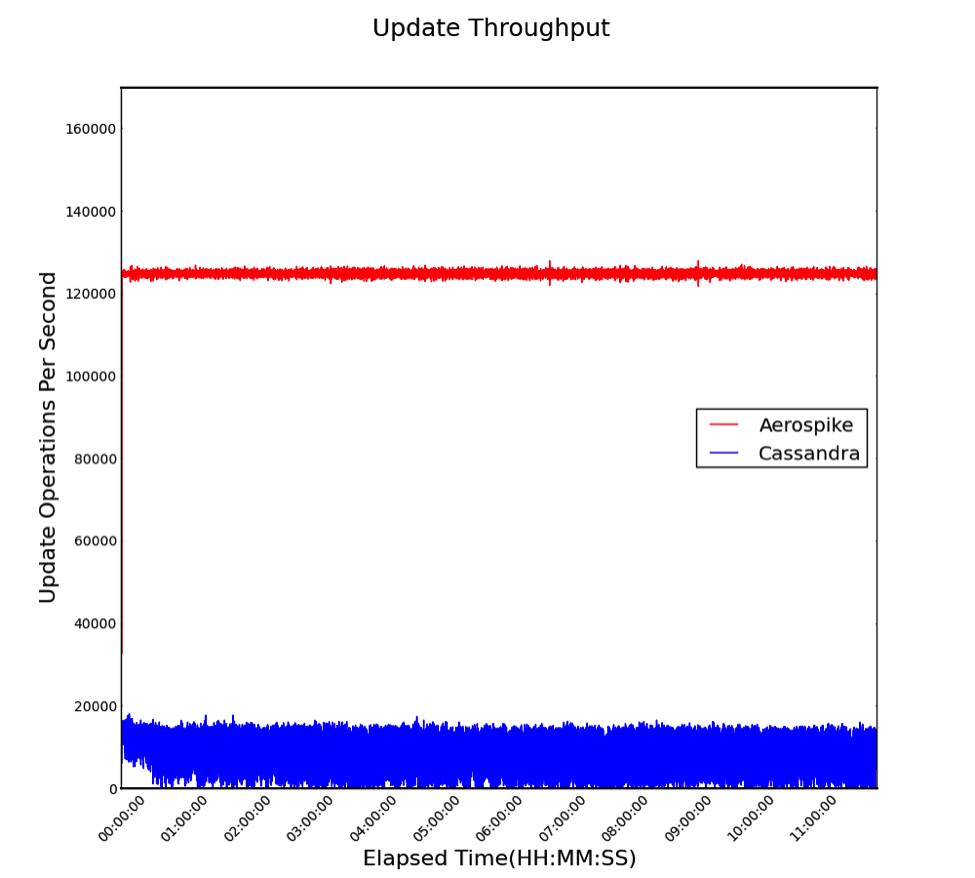

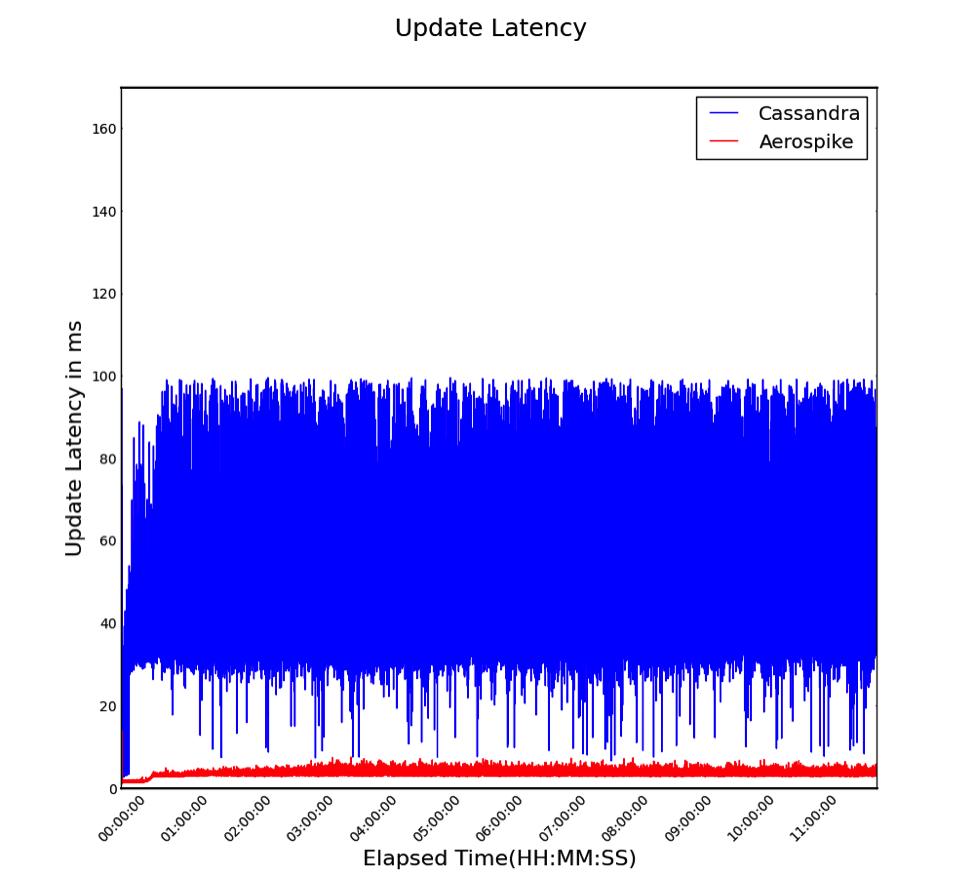

The benchmark graphs illustrate our performance capabilities: in a 2016 benchmark, Aerospike performed 14x faster than a competing NoSQL database, with 42x lower latency and higher predictability.