Dynamic Cluster Management

Automatic storage and exchange of node information, including changes and status, with automated triggers for rebalancing.

Uniform distribution of data, associated metadata such as indexes, and transaction workload makes capacity planning and scale-up or scale-down decisions precise and simple for Aerospike clusters. Aerospike needs to redistribute data only when cluster membership changes. This contrasts with alternative key-range-based partitioning schemes, which must redistribute data whenever a range grows beyond the capacity of its node.

Aerospike’s dynamic cluster management handles node membership and ensures that all the nodes in the system come to a consensus on the current membership of the cluster. Events such as network faults and node arrival or departure trigger cluster membership changes. Such events can be both planned and unplanned. Examples of such events include randomly occurring network disruptions, scheduled capacity increments, and hardware/software upgrades. The specific objectives of the cluster management subsystem are to:

- Arrive at a single consistent view of current cluster members across all nodes in the cluster,

- Automatically detect node arrival and departure, and reconfigure the cluster seamlessly,

- Detect network faults and remain resilient to intermittent network failures,

- Minimize time to detect and adapt to cluster membership changes.

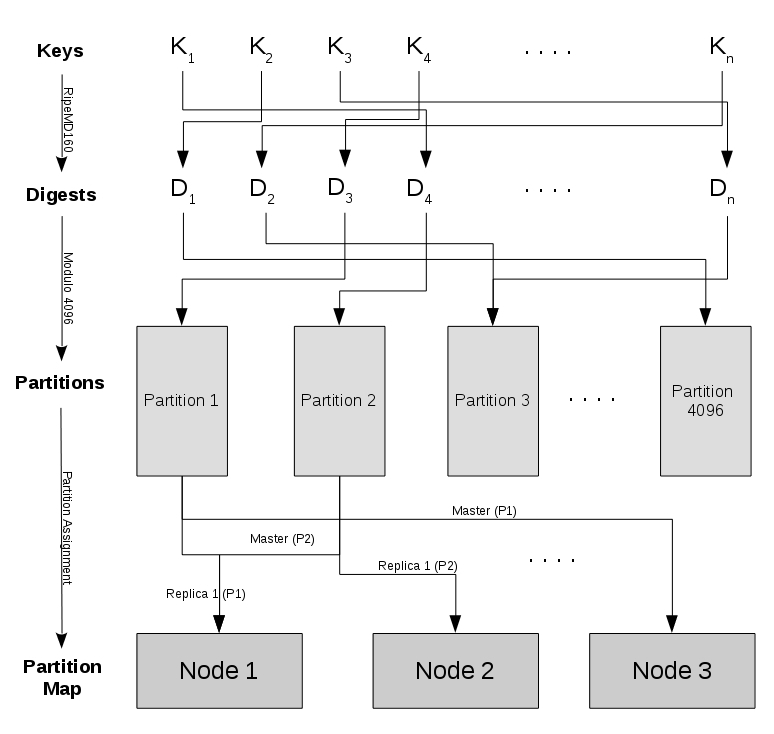

Aerospike distributes data across nodes as shown in Figure 1. A record’s primary key is hashed into a 160-bit (20-byte) digest using the RIPEMD-160 algorithm, which is extremely robust against collisions. The digest space is divided into 4096 non-overlapping “partitions”. A partition is the smallest unit of data ownership in Aerospike. Records are assigned to partitions based on the primary key digest. Even if the distribution of keys in the key space is skewed, the distribution of keys in the digest space, and therefore in the partition space, is uniform. This data partitioning scheme is unique to Aerospike. It helps avoid hotspots during data access, which in turn helps achieve high levels of scale and fault tolerance.

Figure 1. Data Distribution
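The key-to-partition mapping described above can be sketched in a few lines of Python. This is an illustration only, under two stated assumptions: SHA-1 stands in for RIPEMD-160 (both produce 160-bit digests, but RIPEMD-160 is not reliably available in Python's `hashlib`), and the partition ID is taken from the low 12 bits of the first two digest bytes, which is one plausible way to select among 4096 partitions and is not taken from the text. The names `digest_of`, `partition_id`, and `partition_histogram` are hypothetical.

```python
import hashlib
from collections import Counter

N_PARTITIONS = 4096  # fixed partition count stated in the text


def digest_of(key: bytes) -> bytes:
    """Return a 160-bit (20-byte) digest of the primary key.

    Assumption: SHA-1 is a stand-in for RIPEMD-160, used only because it
    has the same digest width and ships with every Python build.
    """
    return hashlib.sha1(key).digest()


def partition_id(key: bytes) -> int:
    """Map a primary key to a partition ID in [0, 4096).

    Assumption: 12 bits of the digest select the partition. The exact
    bits used by Aerospike are not specified in the text.
    """
    first_two_bytes = int.from_bytes(digest_of(key)[:2], "little")
    return first_two_bytes % N_PARTITIONS


def partition_histogram(keys) -> Counter:
    """Count how many of the given keys land in each partition."""
    return Counter(partition_id(k) for k in keys)
```

Feeding this a heavily skewed key set, for example sequential keys such as `user-00000001`, `user-00000002`, and so on, still spreads records across all partitions in roughly equal shares, because the skew in the key space does not survive hashing.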

Aerospike indexes and data are colocated to avoid any cross-node traffic when running read operations or queries. Writes may require communication between multiple nodes based on the replication factor. Colocation of index and data, when combined with a robust data distribution hash function, results in uniformity of data distribution across nodes. This, in turn, ensures that:

- Application workload is uniformly distributed across the cluster,

- Performance of database operations is predictable,

- Scaling the cluster up and down is easy, and

- Live cluster reconfiguration and subsequent data rebalancing are simple, non-disruptive, and efficient.

The Aerospike partition assignment algorithm generates a replication list for every partition. The replication list is a permutation of the cluster succession list. The first node in the partition’s replication list is the master for that partition, the second node is the first replica, the third node is the second replica, and so on. The partition assignment algorithm has the following objectives:

- To be deterministic so that each node in the distributed system can independently compute the same partition map,

- To achieve uniform distribution of master partitions and replica partitions across all nodes in the cluster, and

- To minimize movement of partitions during cluster view changes.
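The three objectives above can be illustrated with a minimal sketch. The text does not describe the actual assignment algorithm, so this example swaps in rendezvous (highest-random-weight) hashing as a stand-in: each node gets a score per partition from a hash of the node ID and partition ID, and the replication list is the set of nodes sorted by that score. The function names, the string node IDs, and the use of SHA-256 are all assumptions made for illustration.

```python
import hashlib
from collections import Counter

N_PARTITIONS = 4096  # fixed partition count stated in the text


def _score(node_id: str, pid: int) -> int:
    """Pseudo-random but deterministic score for a (node, partition) pair."""
    h = hashlib.sha256(f"{node_id}:{pid}".encode()).digest()
    return int.from_bytes(h[:8], "big")


def replication_list(nodes, pid):
    """Return a permutation of `nodes` for partition `pid`.

    Index 0 is the master, index 1 the first replica, and so on.
    The node ID breaks ties so the order never depends on input order.
    """
    return sorted(nodes, key=lambda n: (_score(n, pid), n), reverse=True)


def partition_map(nodes, replication_factor=2):
    """Compute the owners of every partition from the node list alone."""
    return {
        pid: replication_list(nodes, pid)[:replication_factor]
        for pid in range(N_PARTITIONS)
    }


def master_counts(pmap) -> Counter:
    """Count how many partitions each node masters."""
    return Counter(owners[0] for owners in pmap.values())
```

Because the scores depend only on the node and partition IDs, every node that agrees on cluster membership computes the same map without coordination. Adding or removing a node does not change the relative order of the remaining nodes in any replication list, so a partition's master changes only when the new node outranks it or the departed node was the master.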